On the eve of DeepSeek V4 release: How many days do you have left to build your AI moat?

When "uniqueness" becomes the shortest-lived competitive advantage

You spent three months perfecting your AI workflow, and yesterday it was praised in a team meeting as "six months ahead of the industry." This morning, you saw a leak about DeepSeek V4: unified text, image, and video; optimized for domestic chips; open source and free. You stare at the screen, your finger hovering over the mouse, unsure whether to open the technical report.

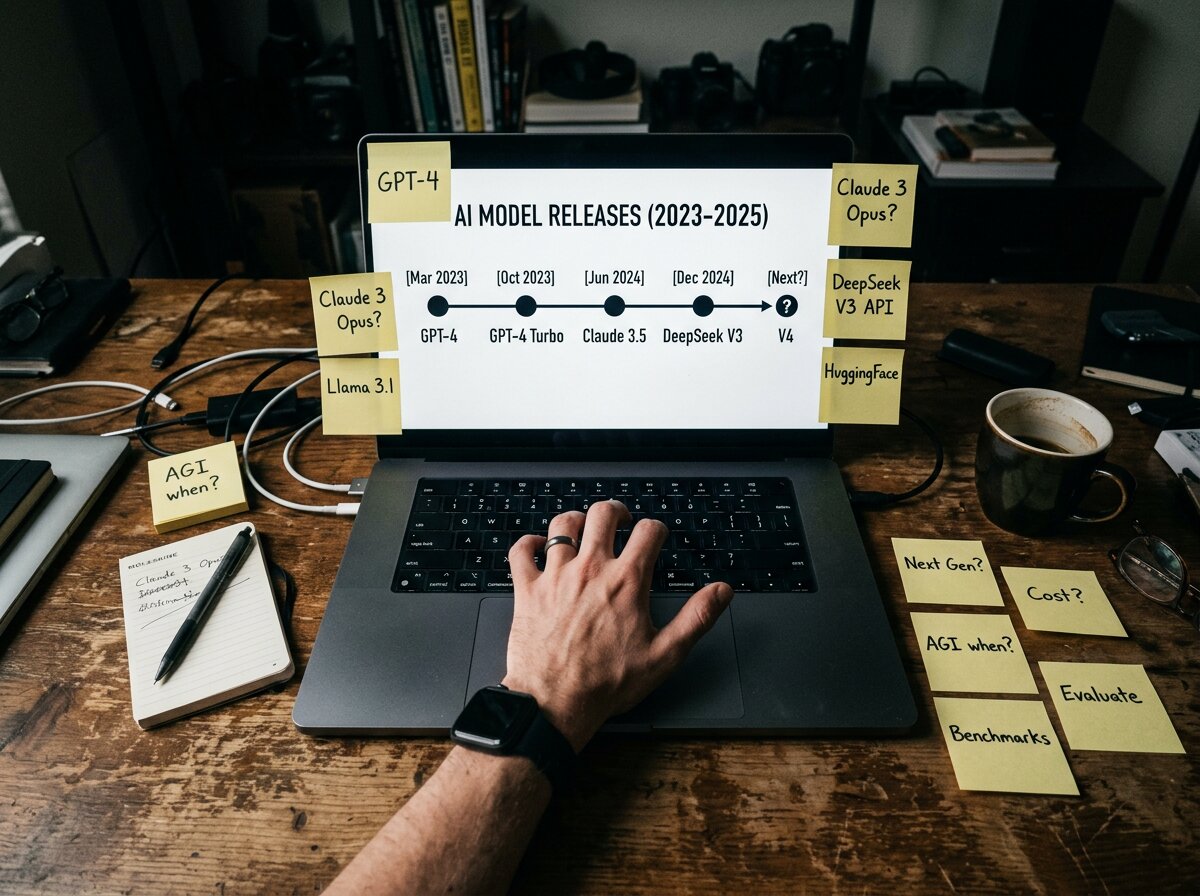

This isn't the first time.

Last February you chose GPT-4, then in May Claude 3.5 came out with three times the cost-effectiveness; in August you switched to Claude, and in December DeepSeek V3 became open source and free; this January you just migrated your team's workflow to V3, and now V4 is coming, bringing multimodal and chip optimizations. You're starting to wonder: are the choices I made only valid for 90 days?

What's even more worrying is that your clients are reading the same news. Last week, a potential client asked, "What model are you using? Won't it become obsolete soon?" You answered confidently at the time, but looking back, that confidence seems like a castle built on sand.

36Kr's report on March 10th was not unfounded. If DeepSeek V4 truly achieves unified processing of text, images, and videos, deep optimization with domestically produced chips, and continues its open-source approach, it means:

- The cost advantage will be further widened (optimization of domestically produced chips = inference costs reduced by another 30-50%).

- The boundaries of capabilities will be redefined (multimodal unification = what you currently do with three models, others can do with a single model).

- The technical barriers will be lowered again (open source = everyone can use it, and your "technical barrier" will instantly become the industry standard).

This is the true predicament of AI entrepreneurs, product managers, and operations managers in 2026: you're not racing against competitors, you're racing against the speed of model iteration. And models iterate far faster than your product does.

The question is: If AI capabilities double every 90 days, how long can your product maintain its uniqueness?

Three layers of traps, each one devouring your moat.

You think you're making a product, but you're actually making a "model translator".

The architecture of most AI products is as follows:

User need → Your product interface → Call the AI model → Return the result → Your product packaging

It seems you've done a lot: requirements analysis, interaction design, prompt engineering, and result optimization. But in essence, your core value lies in "translating model capabilities into a form that users can use."

This positioning has two fatal problems:

Problem 1: Model evolution directly dilutes your value.

- The prompt engineering you spent three months optimizing can be matched by an ordinary prompt once a new model's comprehension improves.

- Your meticulously designed multi-step workflow, enhanced by the new model's improved reasoning capabilities, can now be completed in a single step.

- Your once-proud "AI + human review" hybrid model has become cumbersome after the new model's accuracy improved.

Problem 2: Your moat is built on someone else's foundation.

When DeepSeek V3 was open-sourced, how many AI writing tools lost their pricing power overnight? When users discover, "I can write similar things directly using DeepSeek," what gives you the right to charge a SaaS subscription fee?

Even more brutally, model companies won't wait for you. OpenAI, Anthropic, and DeepSeek iterate with a major update every quarter, while your product iterates with a major version every six months. This speed difference means you're destined to always be chasing.

You think you're building barriers, but you're actually building "fragile" structures.

Many teams' strategy is to "deepen their moats":

- Strategy A: Data flywheel. "We have user data, and the model will become more accurate the more it's used."

  - Reality: If DeepSeek V4 truly achieves multimodal unification, its training data volume will be millions of times larger than yours. Next to it, your "data advantage" is a water pistol beside a fire truck.

- Strategy B: Vertical specialization. "We focus on a specific niche and strive for excellence."

  - Reality: When general-purpose models are powerful enough, the value of "vertical optimization" gets compressed. It is what happened when smartphones appeared and swallowed the markets for dedicated GPS navigators, cameras, and voice recorders.

- Strategy C: Workflow integration. "We are not just AI, we integrate the entire workflow."

  - Reality: This is currently the most effective strategy, but it's also the easiest to misuse. Many teams understand "workflow" as "chaining together multiple AI calls," which is still essentially acting as a "model translator." The real barrier in workflow lies in how deeply you understand the user's business processes, not in how many APIs you call.

Last year, you chose to deeply customize a model and wrote a lot of adaptation code. Now that a new model has been released, you find that the switching costs are high, the opportunity costs are high, and the team is exhausted—every model update is a "technical debt liquidation." You begin to realize that in the AI era, "deeply binding to a certain technology stack" is not a barrier, but a shackle.

You think you're making AI products, but you're actually making "feature phones for the AI era."

In 2007, Nokia was still optimizing the key feel, ringtone quality, and battery life of its feature phones. They did a good job, and users were satisfied. But after the iPhone appeared, all these optimizations became meaningless.

In 2026, many AI products are still optimizing features such as "prompt template libraries," "result formatting," and "multi-model switching." Users need these features, but the question is: once AI agents mature, will these "features" become relics of the past, like the buttons on a feature phone?

DeepSeek V4's multimodal unification is not just a "feature upgrade," but also a "preview of the interaction paradigm": users no longer need to "upload images first, then enter text, and then select the output format," but can directly say "analyze user behavior in this video and generate a social media placement suggestion," and the AI will decide for itself which modality to use for processing and which format to output.

Under this new paradigm, will your current "workflow design" become "over-design"?

This is the most despairing moment: you didn't lose to your competitors, you lost to the times.

The real moat lies beyond AI capabilities.

But the story doesn't end there.

Returning to the initial question: If AI capabilities double every 90 days, how long can your product maintain its uniqueness?

The answer is: if your uniqueness is based on AI capabilities, then it can indeed only last 90 days. But if your uniqueness is based on "problems that AI can't solve," then that's a different story.

Three problems that AI cannot solve

Problem 1: Users don't know what they want.

Even the most powerful DeepSeek V4 can only answer "user-asked questions." But in the real world, most users don't know what to ask.

For example, the real need of a social media manager for an overseas brand isn't "Write me 10 tweets," but rather: On which platforms are my target users active? At what times are they most likely to interact? What content are my competitors posting, and how effective are they? Which of my past content posts performed well, and why? Based on this data, what should I post today?

This isn't a matter of "generating content"; it's a matter of "understanding the business, diagnosing problems, and developing strategies." AI can assist, but it cannot replace.

SocialEcho's data analytics capabilities are not primarily about "displaying data," but rather about "helping users understand data."

- Aggregates data from multiple platforms on a single screen (follower count, impressions, and interactions broken down by day).

- Automatically generates a "Most Popular Content Ranking" (no manual filtering in Excel).

- Automatically monitors and compares competitor accounts (no manual screenshots of competitor homepages).

- Supports 180 days of historical data lookback (so you see trends, not just today's numbers).

The value of these features will not diminish with the release of DeepSeek V4. Because what users need is not "stronger AI," but "clearer decision-making support."

Problem 2: The moments when AI is not present

No matter how intelligent AI becomes, it can only handle the "digital" aspects. However, many crucial moments in social media operations occur where AI cannot see: at 2 a.m., your brand is criticized by influencers on TikTok, and the comments section starts to heat up; over the weekend, competitors launch viral marketing campaigns, and your followers start to dwindle; during holidays, a certain keyword suddenly goes viral, and you need to decide within an hour whether to follow suit.

In these moments, what you need is not "AI to write copy for me," but "someone to keep an eye on things for me and notify me immediately if anything goes wrong."

SocialEcho's social media listening feature does exactly that:

- 24/7 real-time monitoring of 1000+ custom keywords (brand name, product name, competitors, crisis keywords)

- Sentiment swing alerts (AI sentiment analysis accuracy of 95%+, capturing concentrated outbreaks of negative sentiment)

- Competitor activity monitoring (post content, posting time, interaction performance, comment sentiment)

- No KOL authorization required; automatic monitoring via public data sources.

This isn't a competition of "AI capabilities," it's a competition of "presence." No matter how powerful DeepSeek V4 is, it can't monitor the real-time dynamics of five platforms for you.

Problem 3: The decisions AI can't make

AI can generate 100 options, but it can't decide for you "which one to choose." Because decision-making requires more than just "computing power"; it also requires "taking responsibility for the consequences."

A real-world scenario: Your brand receives a negative comment, and AI offers three suggested responses: an official apology and compensation, explaining the misunderstanding and providing evidence, or defusing the situation with humor and guiding the user to a private chat. AI can tell you the "expected effect" of each option, but it cannot tell you "which one is best for your brand." This is because it depends on your brand image, past handling methods, and risk tolerance.

SocialEcho's interaction management features are designed around the logic of "AI assistance + human decision-making":

- AI automatically identifies user emotions (positive reviews/complaints) and intentions (inquiries/purchases).

- Automated comment replies (handles 80% of regular interactions)

- Important operations can be set to require manual confirmation (giving you the decision-making power).

- Supports multiple custom reply templates (allowing AI to reply in your style).

The core concept of this design is: AI is responsible for efficiency, and humans are responsible for judgment. DeepSeek V4 can make AI more efficient, but it cannot replace "human judgment".
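
The split between "AI handles efficiency, humans handle judgment" can be sketched as a simple routing rule. This is a hypothetical illustration, not SocialEcho's implementation: the `Comment` fields, the `SENSITIVE_INTENTS` set, and the `route` function are all assumed names, and the sentiment and intent labels are assumed to come from some upstream classifier.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_REPLY = "auto_reply"      # routine interaction, AI answers
    HUMAN_REVIEW = "human_review"  # a person confirms before anything is sent


@dataclass
class Comment:
    text: str
    sentiment: str  # assumed labels: "positive", "neutral", "negative"
    intent: str     # assumed labels: "inquiry", "purchase", "complaint", ...


# Hypothetical policy: which intents always need a human decision.
SENSITIVE_INTENTS = {"complaint", "refund"}


def route(comment: Comment) -> Route:
    """Send risky comments to a human; let the AI handle the rest."""
    if comment.sentiment == "negative" or comment.intent in SENSITIVE_INTENTS:
        return Route.HUMAN_REVIEW
    return Route.AUTO_REPLY


routine = Comment("Do you ship to Canada?", "neutral", "inquiry")
angry = Comment("This broke after two days.", "negative", "complaint")
print(route(routine).value, route(angry).value)
```

The point of the sketch is that the policy lives in your product, not in the model: a better model improves the labels, but which cases require a human stays your decision.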

Three actions you can take right now

Action 1: Audit your "AI dependency"

Make a list: How many of your core features directly call AI models? If DeepSeek V4 were open-sourced tomorrow, which of your features could users implement themselves? Which parts of your product are things AI "cannot do" or "does poorly"?

If more than 70% of your core value relies on AI capabilities, you need to adjust your product direction immediately.
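
One rough way to run this audit is to weight each feature by its share of your core value and mark whether a user could get it from a raw model alone. The sketch below is only an illustration: the feature names and weights are made up, and `Feature`, `ai_dependency_ratio`, and `PIVOT_THRESHOLD` are assumed names. The only number taken from the article is the 70% threshold.

```python
from dataclasses import dataclass
from typing import List

PIVOT_THRESHOLD = 0.70  # the article's 70% rule of thumb


@dataclass
class Feature:
    name: str
    value_weight: float      # your own estimate of this feature's share of core value
    model_replaceable: bool  # could a user get this from the raw model alone?


def ai_dependency_ratio(features: List[Feature]) -> float:
    """Share of total value that rests on capabilities the model itself provides."""
    total = sum(f.value_weight for f in features)
    if total <= 0:
        raise ValueError("feature weights must sum to a positive number")
    exposed = sum(f.value_weight for f in features if f.model_replaceable)
    return exposed / total


# Hypothetical product; the weights are illustrative, not measured.
features = [
    Feature("AI copy generation", 0.5, True),
    Feature("Prompt template library", 0.25, True),
    Feature("Competitor monitoring", 0.25, False),
]

ratio = ai_dependency_ratio(features)
needs_pivot = ratio > PIVOT_THRESHOLD
print(f"AI dependency: {ratio:.0%}, adjust direction: {needs_pivot}")
```

The weights are a judgment call, so treat the result as a prompt for discussion rather than a measurement.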

Action 2: Shift from "AI Products" to "Business Solutions for the AI Era"

Don't ask "What can my AI do?", ask "What do my users lack in the AI era?"

Taking social media operations as an example:

- ❌ Wrong direction: "We generate content using the latest AI models" (This is an AI capability competition)

- ✅ The right approach: "We help you solve efficiency issues throughout the entire 'content generation-publishing-monitoring-interaction-analysis' process" (this is a business solution).

SocialEcho's product logic isn't "how powerful our AI is," but rather "how we help you do less work and grow more":

- One-click publishing: Saves 90% of publishing time, supports scheduled publishing on 7+ platforms.

- Social media monitoring: 24/7 monitoring, sentiment recognition accuracy of 95%+.

- Data analysis: 180 days of historical data, competitor comparison, offline export.

- AI automation: Automatic comment categorization and intelligent replies reduce repetitive work by 90%+.

- Interaction management: Multi-platform comment aggregation, AI-powered sentiment and intent recognition, saving over 80% of manual costs.

The value of these features will not disappear due to a model update. Because what users want is not "AI," but "less time cost and higher marketing results."

Action 3: Establish a "model-independent" technical architecture

If your code is full of `if model == "gpt-4"`, you've already lost. The correct architecture should be "User Requirements → Business Logic Layer → Model Abstraction Layer → Concrete Model Implementation". This way, when DeepSeek V4 is released, you only need to add an adapter to the abstraction layer, and the switch is invisible to users. Models are tools, not products. Build your product on "understanding the business," not "dependence on a particular model."
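
A minimal sketch of that layering, under stated assumptions: `ModelAdapter`, `EchoAdapter`, `ModelRegistry`, and `draft_reply` are hypothetical names, and `EchoAdapter` is a stand-in that only echoes the prompt. A real adapter would wrap whichever vendor SDK you use.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


class ModelAdapter(Protocol):
    """Model abstraction layer: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoAdapter:
    """Stand-in for a concrete model implementation (no network calls)."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRegistry:
    """Holds the adapters and decides which one is active."""

    def __init__(self) -> None:
        self._adapters: Dict[str, ModelAdapter] = {}
        self._active: Optional[str] = None

    def register(self, key: str, adapter: ModelAdapter) -> None:
        self._adapters[key] = adapter

    def activate(self, key: str) -> None:
        if key not in self._adapters:
            raise KeyError(f"no adapter registered for {key!r}")
        self._active = key

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no active model")
        return self._adapters[self._active].complete(prompt)


def draft_reply(models: ModelRegistry, comment: str) -> str:
    """Business logic layer: knows the task, not which model runs it."""
    return models.complete(f"Draft a polite reply to: {comment}")


registry = ModelRegistry()
registry.register("current", EchoAdapter("model-a"))
registry.activate("current")
before = draft_reply(registry, "Where is my order?")

# A new model ships: add one adapter and flip the active key.
registry.register("next", EchoAdapter("model-b"))
registry.activate("next")
after = draft_reply(registry, "Where is my order?")
print(before)
print(after)
```

Note that `draft_reply` never mentions a model name. Swapping models touches only the registry, which is the property the architecture above is meant to buy you.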

Will you still be there in 90 days?

Will DeepSeek V4 be released as scheduled? Will it truly achieve the unification of text, images, and videos? Will it continue to be open source? The answers to these questions will be revealed at the end of March.

But the more important question is: 90 days from now, when the next "V5" appears, will your product still have a reason to exist?

If your answer is "because we used the latest model," then you probably won't make it through the summer.

If your answer is "because we solved a problem that AI can't solve," then congratulations, you've found your true competitive advantage.

AI will become increasingly powerful, but users' anxieties will not disappear. Your value lies not in what kind of AI you use, but in what problems you solve for users.

This is the only competitive advantage that will not be destroyed by the 90-day iteration cycle in 2026.